Dr. AI Ain't So Bad

On danger and disingenuousness

(Do you like good doctors? Of course you do. Good doctors are great. In today’s letter, I examine the comparisons of AI to bad doctors – who number far too many – and a bad medical establishment.)

On December 5, 2011, Wired ran an article about Afshad Mistri – Apple’s healthcare lead – and his work traveling around the US to promote the iPad to physicians. The article was noteworthy because the iPad was (at least, on paper) a consumer device that the FDA had not approved.

Over time, Apple managed to boost and solidify doctor demand for iPads in their practices while escaping direct FDA regulation of the iPad. While the apps that run on an iPad may be subject to FDA regulation if they are intended to diagnose, treat, cure, mitigate, or prevent disease, iPads themselves are no more medical devices than a desktop PC sitting on a medical assistant’s desk is. They’re just more portable. A tool used for accessibility.

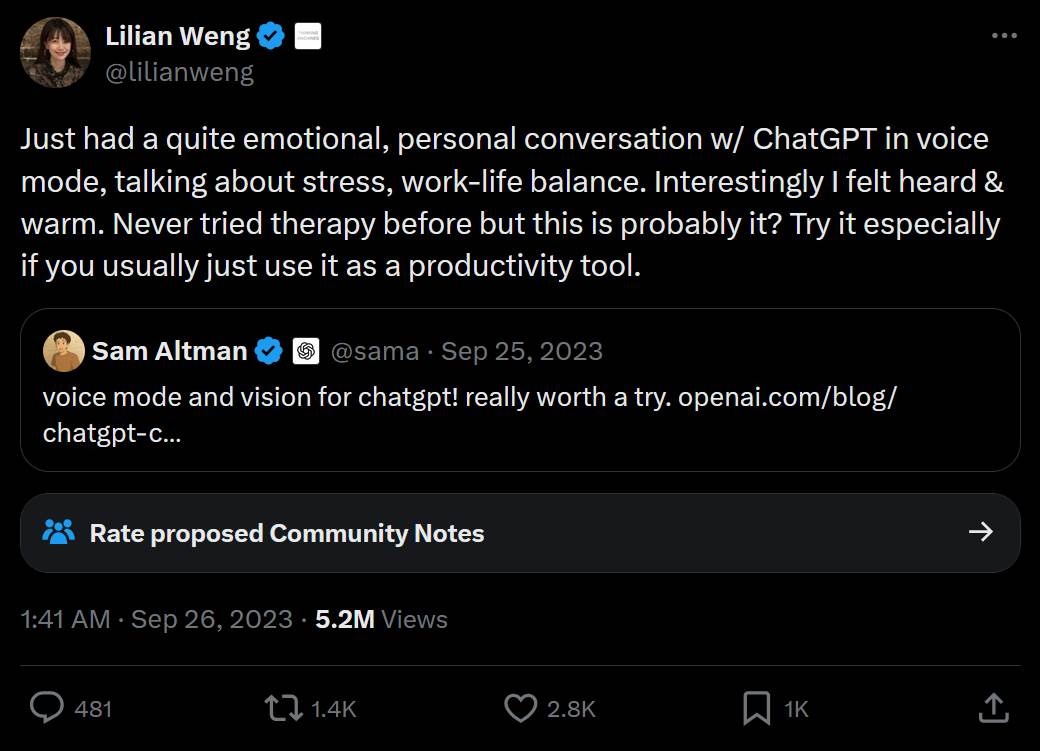

Fast forward to September 26, 2023. Sam Altman had just announced the release of voice mode for ChatGPT the day prior. Sharing Altman’s announcement, OpenAI employee Lilian Weng published a public X post touting a psychotherapy use case for ChatGPT.

“Just had a quite emotional, personal conversation w/ ChatGPT in voice mode, talking about stress, work-life balance. Interestingly I felt heard & warm,” Weng posted. “Never tried therapy before but this is probably it? Try it especially if you usually just use it as a productivity tool.”

Put another way: An employee appeared to be openly promoting the company’s product – which has been neither evaluated nor approved by the FDA – as a mental health therapeutic.

The chaser: Weng was, at the time, OpenAI’s Head of Safety Systems.

(Many people noticed Weng’s impropriety. Just over 14 hours later, Weng added a disclaimer that read: “How people interact with AI models differs. Statements are just my personal take. 🙏”)

Setting aside governance and compliance, though, was Weng factually or sentimentally wrong?

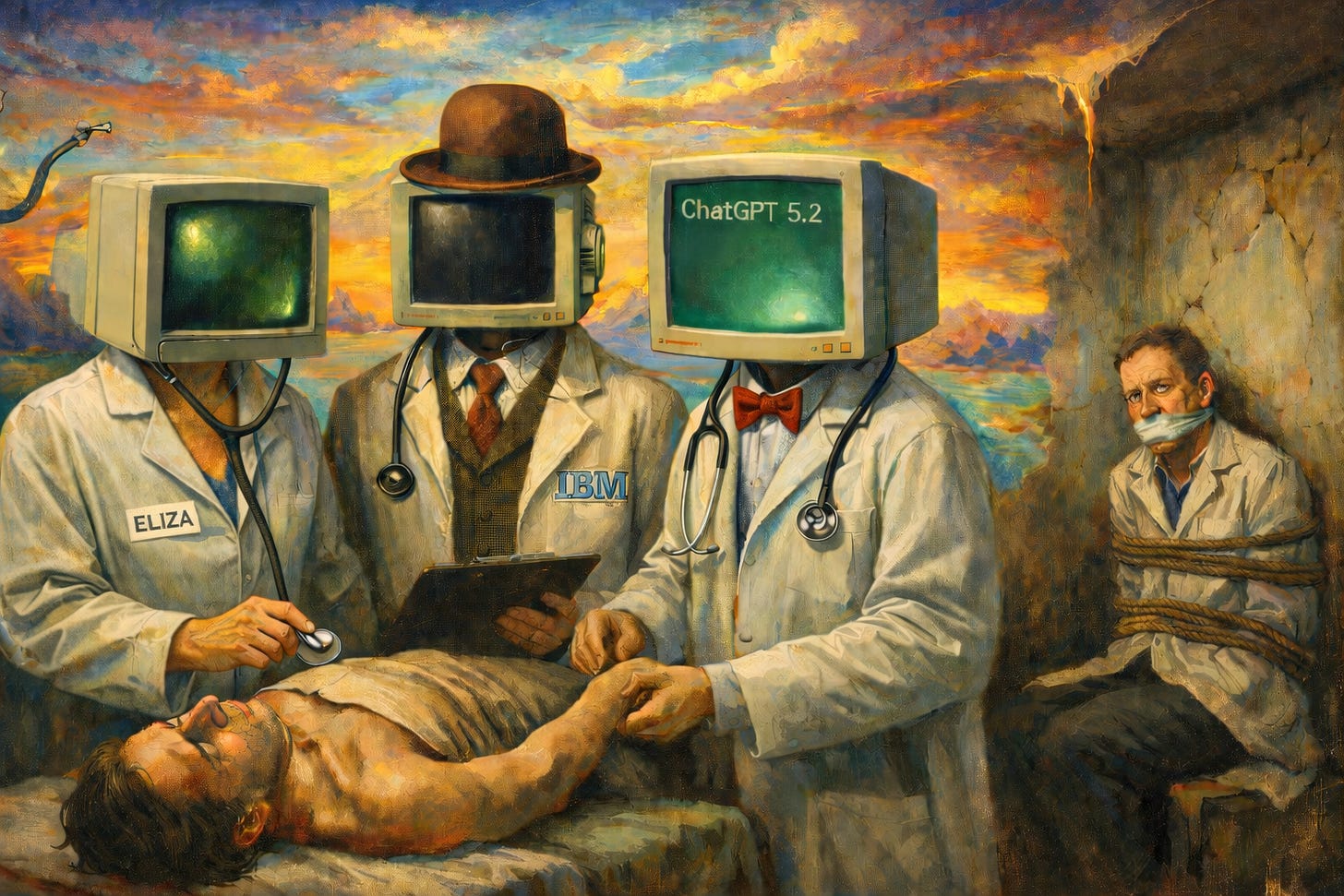

Remember that conversational AI began in 1966 with ELIZA, which itself was a therapy chatbot – albeit a parody of one, relying heavily on Rogerian tropes like “How do you feel about that?”

In ChatGPT’s case, before Weng published her controversial X posts, people had reportedly already begun using ChatGPT for therapy – with positive results. Vice published an article earlier that year publicizing positive therapeutic impacts ChatGPT had had and could yet further have; after all, many take the view that democratizing mental healthcare is an overall good, even if digital mental healthcare is imperfect.

At the same time, the Vice article presented some doubts, cautioning that “in Belgium, a widow told the press that her husband killed himself after an AI chatbot encouraged him to do so.”

But it seems the example was either poorly researched or disingenuously cherry-picked. The AI chatbot in that case (which was not ChatGPT) wasn’t being used for therapy; the man who died was merely seeking companionship – a use case the chatbot had been marketed for.

The article also quoted a psychologist who (1) warned against using GenAI for medical or diagnostic advice and (2) bemoaned the potential damage to “the positive relationship of trust between therapists and patients.”

Since then, the popularity of the medical use case for ChatGPT and other GenAI tools has expanded – and far beyond mental health alone. Recognizing this, OpenAI announced ChatGPT Health – a dedicated feature for integrating and analyzing users’ health and medical data – on January 7, 2026.

Meanwhile, mainstream sentiments opposing patient-facing healthcare AI have become more pronounced.

LLMs and Dangers and Risks, Oh My

On February 9, 2026, Nature Medicine published a study from the University of Oxford that, the school’s press team claims, “warns of risks in AI chatbots giving medical advice.”

An Oxford blog-post-cum-press release sums up the study’s key finding as follows: “that LLMs were no better than traditional methods” in identifying possible health conditions and appropriate courses of action based on symptoms and histories. The paper itself, however, goes further – noting explicitly that the control group outperformed the AI-using group.

“These findings highlight the difficulty of building AI systems that can genuinely support people in sensitive, high-stakes areas like health,” the school’s press release quotes study co-author Rebecca Payne as saying. “Patients need to be aware that asking a large language model about their symptoms can be dangerous, giving wrong diagnoses and failing to recognise when urgent help is needed.”

Dangerous, you say?

Let’s explore.

Someone’s Been Talking to Doctor Watson

AI in the 21st century owes a lot to its use cases in healthcare.

In February 2011, three special episodes of Jeopardy! aired in which IBM publicly demonstrated an AI-like program called Watson. Watson competed against record-setting Jeopardy! champions Ken Jennings and Brad Rutter – and trounced them.

At the time, IBM was loath to use the term “AI” to describe Watson, preferring the terminology “question-answering system” – though that would eventually change. In any case, the 2011 Jeopardy!-playing incarnation of Watson bore two key similarities to modern LLMs:

It used natural-language processing to interpret inputs; it was even able to interpret funnily worded Jeopardy! clues.

It worked probabilistically. It even had an answer panel displaying its top answer choices and respective confidence percentages. Watson was programmed to buzz in only if its confidence score for its top answer was above a certain percentage.
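For the curious, that buzz-in rule is simple enough to sketch in a few lines of Python. To be clear, this is a toy illustration and not IBM's actual code: the names `Candidate` and `choose_buzz`, the 0.5 threshold, and the sample answers and scores are all made up for the example.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Candidate:
    """One entry on a hypothetical answer panel."""
    answer: str
    confidence: float  # between 0.0 and 1.0


def choose_buzz(candidates: Sequence[Candidate],
                threshold: float = 0.5) -> Optional[Candidate]:
    """Return the top-ranked candidate only if its confidence clears the
    threshold; otherwise return None (i.e., stay silent)."""
    if not candidates:
        return None
    top = max(candidates, key=lambda c: c.confidence)
    return top if top.confidence > threshold else None


# Made-up panel: three candidate answers with made-up confidence scores.
panel = [
    Candidate("Answer A", 0.82),
    Candidate("Answer B", 0.11),
    Candidate("Answer C", 0.04),
]

decision = choose_buzz(panel)
print(decision.answer if decision else "(no buzz)")
```

Raise the threshold to 0.9 and the same panel produces no buzz at all. The takeaway is that the system ranks guesses and knows when to keep quiet, which is the same shape as a ranked differential diagnosis.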

After the Jeopardy! episodes aired, this latter feature attracted many in the medical field – who saw Watson’s potential value for differential diagnostics. Within a few years, doctors were successfully using Watson for this purpose in diagnosing rare diseases. Today, medical students are being trained in using AI tools – including GenAI tools like ChatGPT – to enhance diagnostics.

At the same time, the medical profession is feverishly warning us plebes against so much as thinking about using AI in any medical context.

You know, just like when MDs and NPs roll their eyes at you and make a snarky little “Someone’s been talking to Doctor Google” comment when you dare to say that you took the awful, awful step of bothering to research your own symptoms and condition.

Sorry, do I sound bitter? Whoops. Take note of my bias as we pull back and dive into what actually happened in this study.

Diving Deeper into the Oxford Study

To better understand the problematic nature of this hypocritical elitism, let’s understand the Oxford study – both how it was conducted and its actual findings.

To wit, here are my problems with it:

1. The study was outdated the moment it was published.

Participants not in the control group were randomly assigned either ChatGPT-4o, Llama 3, or Command R+. And then the authors and Nature alike had the chutzpah to publish this passage in the study: “[P]roviding people with current generations of LLMs does not appear to improve their understanding of medical information.” (Emphasis mine.)

In case you’re not clear on why that’s bullshit: as of press time, ChatGPT is on version 5.2, while Llama is on version 4. Again, this study was published in February 2026.

Even granting that writing up a study and shepherding it through the journal-submission and peer-review processes can take a long time, that sentence is an embarrassment – highlighting that medical research is not keeping up with the technology it purports to assess.

That said, this alone does not sink the study. Heck, I’m one of those people who thinks ChatGPT-4o was largely superior to ChatGPT 5.x. So let’s dive deeper into the bullshit.

2. The study’s conclusions are irrelevant because of the participant selection.

Too many of the AI-group participants in the study aren’t GenAI/LLM users to begin with. And it gets worse.

More than 25% of the non-control group (i.e., the AI-using group) had never used GenAI or LLMs before; an additional 20% reported using GenAI/LLMs less than once per month. Taken together, that is roughly 45% of the AI-using group with little to no LLM experience.

Even more distressingly, many of the participants – more than 18% of the AI-using group – use the internet less than daily.

(To put that in statistical perspective, in 2019, only 13% of adults in Great Britain failed to use the internet every or nearly every day – and that was pre-pandemic. It is hard to imagine that figure has gone up since before COVID.)

It’s 2026. Ergo, you can’t properly claim that a study shows that people shouldn’t trust GenAI/LLMs with their health decisions when the study includes a substantial portion of people who don’t or barely use GenAI/LLMs to begin with – especially because those people aren’t the kind of people who would be thinking about using AI tools anyway.

This is like conducting a study on how effective driving a car is to get you to the doctor’s office and having 25% of your non-control-group participants be people who don’t have driver’s licenses.

3. The study is more about AI-prompting skill than anything else.

To that point, the authors repeatedly acknowledge in the study that many of the problems participants had in getting accurate and optimal responses from the AI came from poor prompting, submitting incomplete information, and a failure to follow recommendations – “suggesting that the performance issues when pairing participants with LLMs may be attributed to human–LLM interaction failure.”

Meanwhile, the study’s authors make much of the fact that GenAI tools performed well when fed the scenarios directly instead of via role-playing participants, “correctly identifying conditions in 94.9% of cases and disposition in 56.3% on average.” (For you laypeople, “disposition” means whether you should do self-care or go to a general practitioner, urgent care, emergency room, etc.)

In other words, entering optimal prompts with complete information helped.

So, yes, tools can be ineffective and “unsafe” when used incorrectly or suboptimally. Apparently, it took the University of Oxford to tell us this.

But the authors seemingly go out of their way to avoid talking about how useful (or, if you like, “safe”) AI is when put into the hands of capable users.

This is emblematic of the underlying conflict feeding into the AI-for-patients question – that many doctors don’t see patients as anything approaching capable. And it leads me to…

My Fourth Problem with the Oxford Study

In the Supplementary Materials, the authors note that women performed better across the board – both in the GenAI group and in the control group – than men did in correctly identifying conditions and dispositions.

That fact both (1) is unsurprising and (2) highlights exactly why GenAI is a useful and helpful healthcare tool for laypeople.

Why? Because women are used to trying to become experts on their health to justify themselves to crappy doctors.

Medicine is a field in which many doctors routinely secretly label female patients with legitimate health problems “WW” or “TW”. “WW” stands for “Whiny Woman.” “TW” stands for “Train Wreck.”

Charming.

Women are used to going to the doctor with health complaints only to usually be told one or more of the following three things:

(1) “That’s just your period.”

(2) “You need to go on The Pill.”

(3) “Just lose weight.” (This includes women whose health complaint is “I can’t seem to lose weight.”)

Men, of course, get medically gaslit too from time to time (I’ve certainly got some stories). But women commonly and systemically have to go through a lifetime of medical gaslighting, prompting them either to miserably give up on resolving their health issues or to document and research the hell out of them to become subject-matter experts.

So of course they scored better in a study measuring medical-diagnostic ability – GenAI or no.

The Case for Doctor AI

This is part of why we need democratizing, non-gatekeeping tools like GenAI – to make real, non-bullshitty DDxing available to laypeople.

And why not? LLMs have been trained on more scientific and medical research than the entire staff of a medical practice could hope to ever read.

Meanwhile, 60% of Americans have at least one chronic disease – and 90% of the money spent on US healthcare goes toward their care.

Yet many doctors – the ones who gaslight patients, ignore root causes, and prescribe whatever the cute blonde with the rolling suitcase tells them in the five minutes they spend barely listening to the patient – sure do hate it when a patient researches their own symptoms and conditions.

Accordingly, the medical establishment has been making much of a case published last year in which a man gave himself bromide poisoning after replacing sodium chloride – salt – in his diet with sodium bromide. The man allegedly made this dietary substitution after asking ChatGPT for an alternative to salt.

(My educated guess is that ChatGPT’s alleged recommendation stemmed from the fact that sodium bromide can reportedly be substituted for sodium chloride in a chemistry lab because both substances react similarly.)

The moral of the story, according to the zeitgeist: That’s why you shouldn’t take medical advice from ChatGPT!

This, too, is bullshitty.

Firstly, nutrition and figuring out a low-sodium diet are not quite “medical advice” (otherwise, people are routinely getting “medical advice” from morning television, checkout-line magazines, and other pop-culture channels).

Secondly, the man’s ChatGPT logs have not been reviewed or revealed. We have no idea if he asked, “Hi, ChatGPT. What should I eat instead of salt?” or if he instead asked “What’s something that’s chemically similar to sodium chloride?” or anything else in between or entirely separate. The anecdote represents incomplete data, and scientists are usually warning us about that sort of thing.

To this end, people are either competent to use a tool or they are not; there’s no accounting for PEBKAC errors (IYKYK), and those with technological illiteracy make far dumber (though usually less costly) mistakes about everyday technology.

Am I being a jerk? Maybe. But GenAI works probabilistically. Faced with “Can you recommend alternatives to salt?” or a similarly phrased question, absent other specific context, GenAI will generally assume the conversation is about salt in the context of eating. (Accordingly, I would be willing to bet on Polymarket or Kalshi that something really screwy happened with the user inputs here.)

And, because GenAI works probabilistically, it often (not always, but often) gets diagnostics right when prompted well – especially for those patients suffering from more esoteric problems that a jaded human doctor would never think to diagnose. Medical students and young doctors are taught the aphorism “When you hear hoofbeats, think of horses, not zebras” – but too often, this gets misinterpreted as “Zebras don’t exist.” Fortunately, for many real-life patients who have gotten accurate diagnoses from GenAI they otherwise never would have received, this is one bit of human bias that GenAI seems to largely lack.

Dangerous to Whom?

History is full of examples of elites warning that commonfolk would put themselves in danger by reading and researching things for themselves. For a couple of examples, let’s go back to the 16th century: In 1543 in England, Parliament passed The Act for the Advancement of the True Religion – placing bans and restrictions on the laity and women (described in the law as “lower sortes”) reading Scripture that had been translated into English because reading about their own religion for themselves would present a “Danger to” them. Later that same century, the Council of Trent warned that, without restriction and supervision, “more harm than good” would result from translating Scripture into the vernacular for the laity – for the same reason.

Was this trend really about the struggle for individuals’ souls? Or was it, more likely, about maintaining control?

What’s really going on here is that the medical establishment sees GenAI as an existential threat to itself, not to public health. The ridiculously extreme case above about bromism – which is not at all an example of true medical advice – is the best “case in point” that anyone on that side of the argument can find. It’s desperate, it’s pathetic, and it exposes medical elites’ underlying fear of losing relevance.

Meanwhile, for every tale of someone damn near killing themselves because they went to GenAI for NOT MEDICAL ADVICE BUT GENERIC HIGH-LEVEL NUTRITIONAL ADVICE (by my count, so far, that’s one case we know of), there are many more of people gaining real insight into their health conditions and even improving their health.

I know people in this boat, and I am one of the people in this boat.

The insult upon injury in this whole situation is that, to make these points, I find myself looking like one of those obnoxious 996 AI-booster bros. And I’m not. AI – generative or otherwise – is no panacea. Of course it makes mistakes. Of course there are all kinds of risks to using AI in the medical context. Of course we shouldn’t replace human doctors – or other humans – with AI.

Real doctors are human. I get that.

But so are patients.

If GenAI can mitigate if not rid us of the problems of institutionalized healthcare – the gatekeeping, the gaslighting, the gross attitudes about us – then maybe it can and should be part of the way forward to improving health outcomes.

(Disclaimer: I am not a medical doctor. Regular readers are used to my disclaimers warning against taking legal or investment advice from this newsletter. Suffice it to say, if you take medical advice from this newsletter, you probably genuinely should get your head examined.)

Did this letter resonate? Do you disagree? Got your own experience with doctors and/or AI to share? Let me know in the comments or send me a message. -JS